Popular

C++

Unreal

unrealengine

Vulkan

OpenSource

Developer Blogs

Forum Discussion

Started by theish

4 hours, 51 minutes ago

Started by VChuckShunA

20 hours, 22 minutes ago

Started by MagicRainStudios

23 hours, 17 minutes ago

Started by Nagle

2 days, 8 hours ago

Game Developer News

zephyr3d v0.4.0 Released - 3D rendering framework for WebGL & WebGPU

April 21, 2024 03:46 PM

NeoAxis Game Engine 2024.1 Released

April 18, 2024 03:01 PM

Khronos Releases OpenXR 1.1 to Further Streamline Cross-Platform XR Development

April 15, 2024 01:47 PM

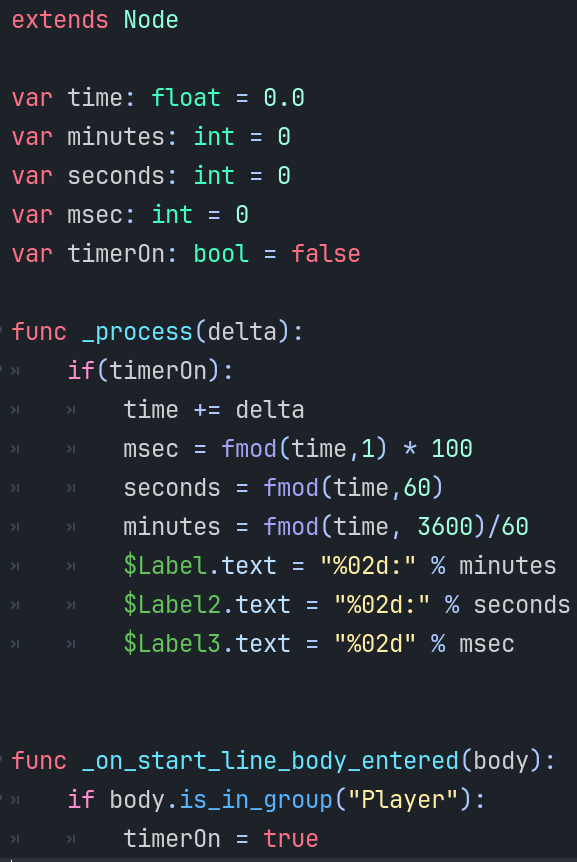

Feature Tutorials

Latest GameDev Projects

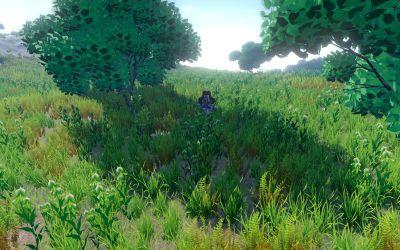

RPG, Farm and Nature Simulation Game with a deep story and complex new mechanics

Top Members

Popular Blogs

Retro Grade

33 entries

cyberspace009's Journal

8 entries

Flappy Assassin is now available

5 entries

Dave, The Mystical Workings Of...

29 entries

The truth between the lies

7 entries