Nothing super spectacular, well actually it is for me, but it doesn't look it.... Found a major bug of sorts in my dungeon generation algorithm: it refuses to put corridors where I want them. On top of that, life has been throwing me curveballs left and right, so I'm short on time.

My factory implem…

Unfortunately no video, the view component is my next big hurdle though.

DUNGEON:

I've been putting most of my effort into getting the dungeon generator up and running before getting things on the screen. The dungeon is a container that holds a series of floors. Each floor contains a terrain, monsters an…
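A minimal sketch of that container idea, in Python purely for illustration (the post doesn't show its own code). The class names `Dungeon` and `Floor`, the `add_floor` helper, and the `'#'`/`'.'` tile encoding are all assumptions, and only the two floor contents the excerpt names (terrain and monsters) are modeled:

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Floor:
    """One level of the dungeon; only terrain and monsters are modeled here."""
    # Grid of tiles. The '#' = wall, '.' = open encoding is an assumption.
    terrain: List[List[str]]
    # Placeholder: monsters are tracked by name only in this sketch.
    monsters: List[str] = field(default_factory=list)


@dataclass
class Dungeon:
    """Container that holds a series of floors, in the order they were added."""
    floors: List[Floor] = field(default_factory=list)

    def add_floor(self, width: int, height: int) -> Floor:
        # Hypothetical helper: each new floor starts as solid wall, so a
        # generator can carve rooms and corridors into it afterwards.
        floor = Floor(terrain=[["#"] * width for _ in range(height)])
        self.floors.append(floor)
        return floor
```

Each row is built as its own list, so carving one tile (`floor.terrain[y][x] = "."`) doesn't leak into other rows.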

Dungeon Crawl Stone Soup

I finally crawled back into the world of roguelikes; I could never bring myself to play any ASCII version consistently. I've been hooked on 'Dungeon Crawl Stone Soup', which has a graphical version (tiles) as well and is updated quite a lot.

The mechanics are similar to D&D…

A man of few words, but the attention whore inside me has been waiting for over a month now. Here goes

Design and learn by prototyping.

Prototyping I've found to be a great exercise in teaching myself to pump out better code, faster. If only I could find some time to be less tired and motivated to…

Busy busy this man is. Well, as usual I'm very good at destroying hardware... but keep it to yourself. Rawr, servers beware, the cursed one is here. I actually went computerless for a couple of months. Stuck to pen and paper to flesh out my ideas and my phone for surfing the 1's and 0's.

As I last left off I …

Pitter patter goes the heart. Something I forget so often, and I've noticed it amongst my peers: not taking care of myself. No, I'm not talking about that, you perv. I find myself so caught up in the minds of others that I don't think about how I feel. It's been almost two weeks, and that's exactl…

I was listening to this song earlier and it just had this great verse in it.

Cause you

You're so calm

I don't know where you are from

You

You're so young

I don't care what you've done wrong

To me it screams the essence of childhood, being an adult and watching children grow up. To not take the world so …

Gotta love how easily kids pass along the sickness. I'm a walking disease today: no work, and I don't want to hang out for fear I may be too far from a bathroom ;) So here I am, clean or code... let's code. While we're at it, let's toot my horn as well. Yes, there is more than one in this head.

Wasn't expecti…

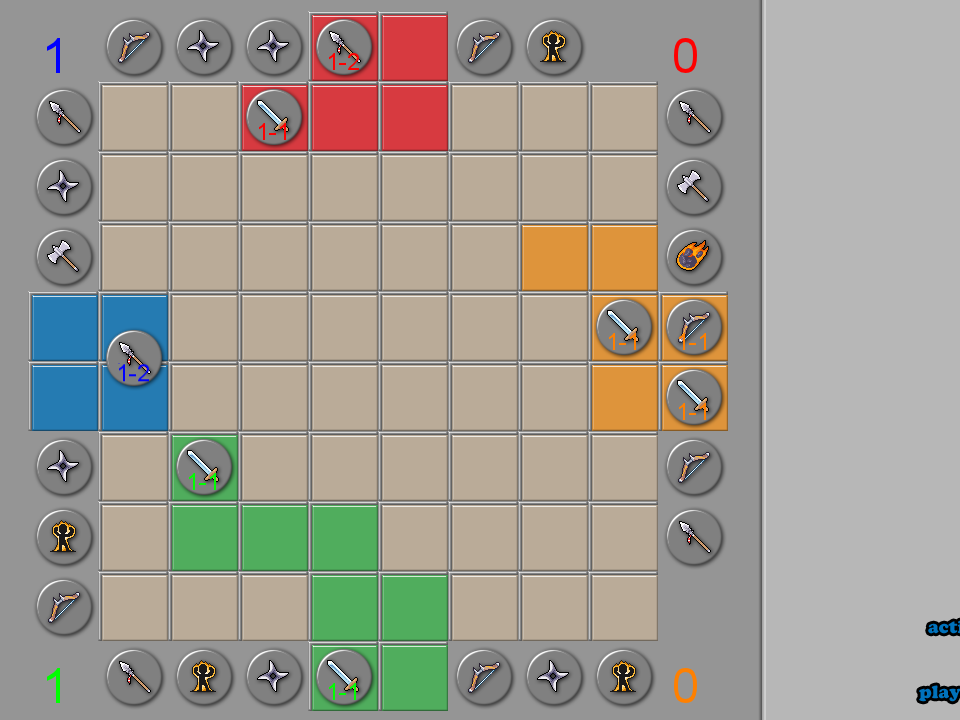

Later in the week, next week, same thing. Right?.... right? Real simple, real quick: get the highest score possible and brag to yourself about it, 'cause that's what life is all about. Right?

Playing the game - You're given a set number of turns; each time you reveal a colored square it takes a turn …

~$2,000 and my truck is finally running after 2-3 months, gonna need a $400 fuel pump soon too... uh. ~$200 and I finally got my PC back to normal, but had to reinstall everything. Thing locked up to the point I couldn't get anything off it... good thing I happened to back up my projects two w…

Just a quick update on the new conquest game.

Achievements

-- Updated and filled in missing documentation for every game-specific header within the project.

-- Implemented base instances of all phases (upkeep, first setup, pre action, post action, second setup, and cleanup).

-- Identified se…

No kids for the weekend... already feels weird. Plus no Sunday magic... and most everyone is with family for the holidays... ah, what to do with my time. Well, I did some hardcore programming to pass the time. Assessing some of my achievements and future milestones for the project, here's a short ver…

That's right.... What? Delivered freight from 8am - 2pm, lunch, nap, stocked freight from 6pm - 11:30pm. Put my clothes back on and left what easily could've been a model's hotel room at 3am. Somehow made it home to sleep. Did it all over again at 8am. Yet I still have no money to show for it....…

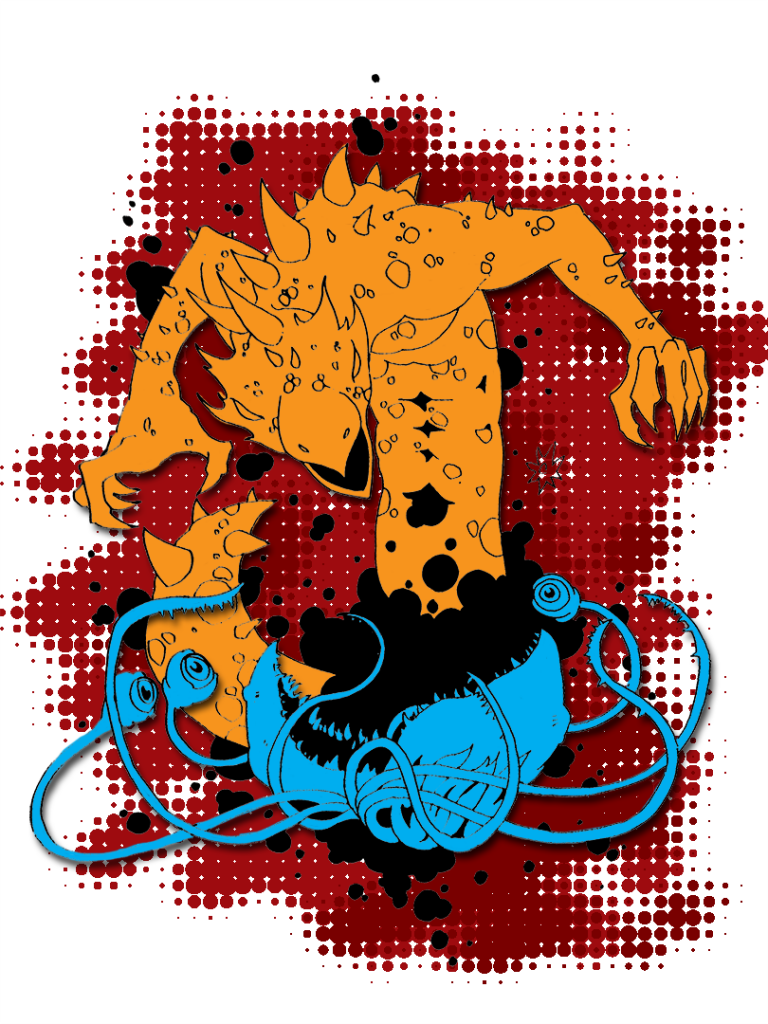

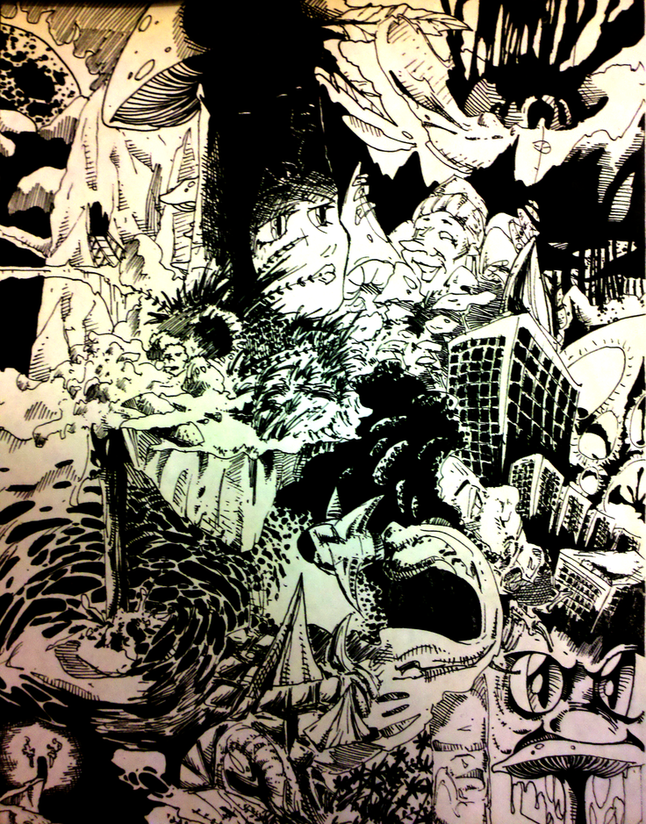

A turn-based strategy game with card game mechanics, *sigh* I know... exciting. I've had this on the back burner for a long time and just never brought it to light. Just messing around with art some more, I redid all the original 1st grader art. Not really a huge improvement, but at least it has cons…

Oh Bethesda, you do love putting out fun and buggy games. They definitely took a lot of the good things from the Fallout games when it comes to the controls. In Oblivion it always seemed so hard to gather/collect stuff, to the point I usually only picked up things that looked worth it. I love the fact that …

*edit* oops forgot the video... I really was that tired.

Yeah, so only 4 and a half hours to be able to sleep last night. For the sake of not sleeping in again and getting yelled at for it... I once again opted to stay up. Well, unfortunately I couldn't focus enough to get anything done. 36 hours later …

So I pull the switch, the switch, the switch inside my head.

And I see black, black, green, and brown, brown, brown and blue, yellow, violets, red.

And suddenly a light appears inside my brain

Back to coding along with the hundred other projects going on in my life. Been several weeks if not a month or…

moist...? Messing around with the water simulation in the ecosim. Trying to get it to flow over the height map properly. I think my values are just off scale or I'm still not getting the rules right.

Currently these are the rules governing how water flows.

For each Cell;

-- if Cell water > threshold

-…

Funny how a smile can lift your spirits. I just had an idea for mixing tower defense with card game mechanics. Kind of a more dynamic tower defense. If you've ever played Plants vs. Zombies, you'll know what a row-based tower defense game looks like. Well, imagine that turned vertical. So there is …

Gain again what they want to steal. Lack of motivation, fear or just straight-up laziness. The hardest thing in life has got to be starting. On the low end of bipolar, everything feels like sitting in a race car with no gas. Everything is cool 'n' all, but it just never goes anywhere.

I've overwhelmed m…

Huh? Where you been?

Joyless and frustrated, for two months, dealing with four letter expletives and the people that start it. No offense but why do all women have to be ignorant, or uninterested? Why do people have to be so vague? I'm trying not to get angry, but I really want to break something. i …

Added cloud forming to the ecomimic. The conditions for evaporation and rainfall aren't active, so what you're seeing is the effect that wind has on the clouds. The second part, with the green, is the atmospheric pressure, which simulates the wind; the last section is the air temperature being alt…

There's vegetation, represented by the green dots, but there's no distinguishing between the types of vegetation they represent. So what should define a plant? So far the basics are:

- waterThreshold - how wet the soil can be before it starts to die off

- nutrientRequirement - how many nutrients it needs …
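As a rough sketch, the traits listed so far could be bundled into one record per plant type. This is Python for illustration only, not the sim's actual code. `PlantType`, `name`, and `is_stressed` are made-up names, the units are left abstract, and `nutrientRequirement` is read here as a minimum the soil must supply, which is an assumption since the description above is cut off:

```python
from dataclasses import dataclass


@dataclass
class PlantType:
    name: str                   # hypothetical label to tell plant types apart
    waterThreshold: float       # how wet the soil can be before the plant starts to die off
    nutrientRequirement: float  # assumed: minimum soil nutrients the plant needs

    def is_stressed(self, soil_water: float, soil_nutrients: float) -> bool:
        # Hypothetical check: too much water, or too few nutrients, for this type.
        too_wet = soil_water > self.waterThreshold
        underfed = soil_nutrients < self.nutrientRequirement
        return too_wet or underfed
```

With something like this, each green dot could carry a reference to its `PlantType`, and the cell update would just ask `is_stressed(...)` with the cell's current water and nutrient values.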

Still alive, just not awake. Still looking for an artist for Kylar's Valcano. In the meantime I've been messing around with cellular automata, trying to make a simple ecosystem like the old SimEarth.

What's happening you might ask, well let me tell ya. The shades of brown represent how fertile…
