I thought of limiting the communication between players and host by changing how often they receive updates. If the screen is 2000 pixels wide and a character takes up the whole screen at 1 meter, then at 1/(2000/(d)) it will only have a pixel of change for a meter of movement, so if the maximum speed is 1…
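Here is a minimal sketch of that arithmetic, under assumptions that are mine and not from the entry: a simple pinhole view where a 1-meter-wide character fills the 2000-pixel screen at 1 meter, so one pixel at distance `d` covers `d / 2000` meters (the same quantity as `1/(2000/(d))`). The function names and the "one update per pixel of possible movement" rule are hypothetical illustrations.

```python
def pixels_per_meter(distance_m, screen_px=2000):
    """On-screen pixels covered by 1 meter of sideways movement at this distance.

    Assumes a 1 m wide object fills the whole screen at a distance of 1 m.
    """
    return screen_px / distance_m


def update_interval_s(distance_m, max_speed_mps, screen_px=2000):
    """Longest time between updates before a mover could shift by one pixel.

    One pixel corresponds to distance_m / screen_px meters, and at the
    maximum speed that takes (distance_m / screen_px) / max_speed_mps seconds.
    """
    meters_per_pixel = distance_m / screen_px
    return meters_per_pixel / max_speed_mps


if __name__ == "__main__":
    # Print the suggested update interval for a few example distances.
    for d in (1.0, 100.0, 2000.0):
        print(d, update_interval_s(d, max_speed_mps=1.0))
```

Under these assumptions, farther players need updates less often, because the same movement covers fewer pixels on screen.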

![Cloud gaming, ad revenue system, higher dimensional grid [edit]](https://uploads.gamedev.net/profile/photo-43550.png)

Some more time for ideas.

You could use an Amazon AWS EC2 instance or other cloud platforms to make a cloud gaming platform and recruit resources on demand. On Steam and other cloud platforms it's possible to steal and crack the game code and logic and thus pirate the game for free. If th…

![not ideas [edit]](https://uploads.gamedev.net/profile/photo-43550.png)

Actually I don't care about discussing this.

So I guess I'm going to mix the fun of a break I was going to take with actually trying to make money. I don't actually need to make money. I just need to be trying to make money. And I have a load of better things to be doing than games and anime…

![Ideas [edit]](https://uploads.gamedev.net/profile/photo-43550.png)

I think I have to talk about everything on my mind and go into detail into the ideas everywhere because maybe the mental disconnect I feel is because I'm mulling this over by myself and there's a disconnect in communication or contact with reality. Also I thought maybe actually making games I wa…

I actually have to get a job now because I'm not officially making money, even though it's ridiculous and I should be getting paid for the useful physics work that I do. All these people in universities getting taught this ridiculous stuff that they take so seriously that if they even tried a lit…

![subfield [edit3]](https://uploads.gamedev.net/profile/photo-43550.png)

I'm kind of not sure if working on this takes too much of a mental commit charge as opposed to working on the useful physics work that I do. I didn't sleep though so this is not like I'm capable of doing anything besides mental work anyway. I think in the day today I might be able to do some wor…

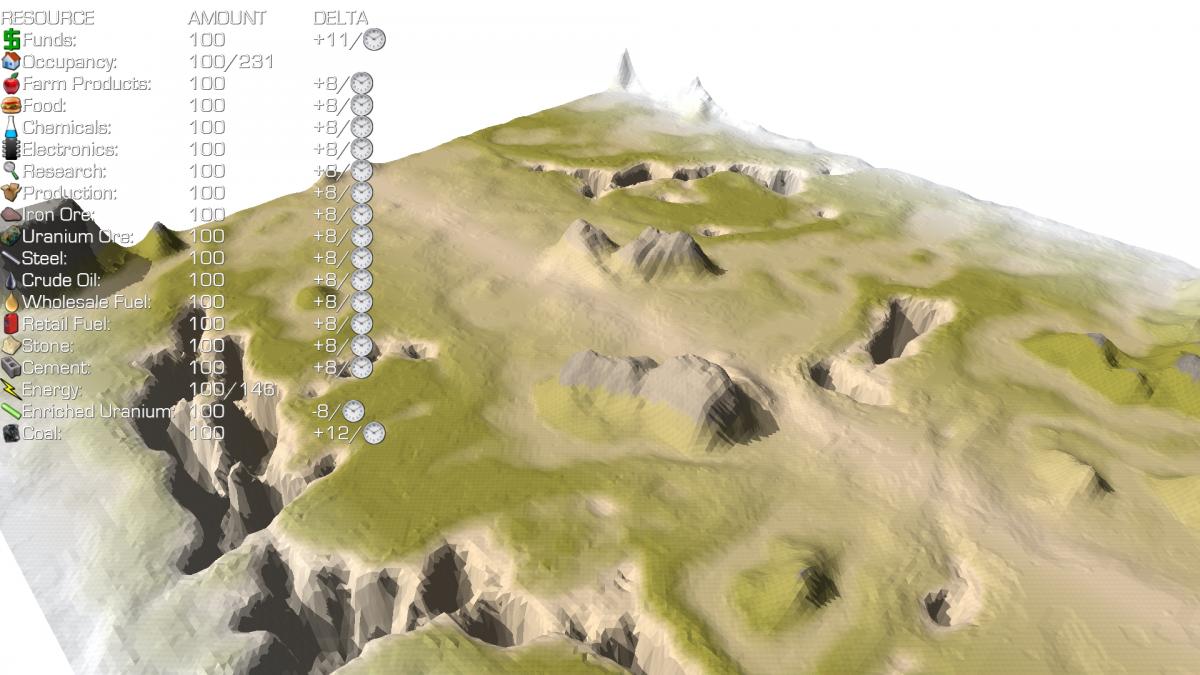

Here is how it is currently going.

https://github.com/dmdware/dopamine

Requires Windows 8.1 to test

https://drive.google.com/open?id=0B2Wuir9-DSiUcVktWWttUm9IVXM

So this has to have some kind of kick or purpose beyond being just a game. So here's an idea for what was originally going to be "isometric forum" but now is "subfield" the physics lecture hall. Basically you're a student and somebody's giving a lecture. You have all the controls of an FPS. You …

I'm just happy racking up screenshots and pieces of art on my DeviantArt, which I get enjoyment out of. That is, I'm saying this because, you may be wondering why, the whole giant game is being made at all. I looked through so many, well, a few, new pieces of mecha art I saw. It feels like whoev…

This is another idea I had. Basically, imagine you need to keep a list of billions of IP addresses (32 bits each) of visitors to your site, or worse, IPv6 addresses (128 bits each). If you stored each IPv4 address you would get a total of 4+ GB. What if I told you you can reduce that to 400 MB?

The amount…
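For comparison, here is a rough sketch of the storage arithmetic. The entry's own technique is cut off above, so this uses a plain bitmap (one bit per possible address), which is a standard technique that I am swapping in and not necessarily the method meant here. The names `list_bytes`, `bitmap_bytes`, and `AddressBitmap` are hypothetical.

```python
import ipaddress


def list_bytes(count, bits_per_address=32):
    """Bytes needed to store `count` addresses as a flat list."""
    return count * bits_per_address // 8


def bitmap_bytes(address_bits=32):
    """Bytes needed for one presence bit per possible address."""
    return (1 << address_bits) // 8


class AddressBitmap:
    """Set of integer addresses stored as one bit per possible value."""

    def __init__(self, address_bits=32):
        # address_bits=32 allocates 512 MiB; use fewer bits when experimenting.
        self.bits = bytearray(bitmap_bytes(address_bits))

    def add(self, value):
        self.bits[value >> 3] |= 1 << (value & 7)

    def __contains__(self, value):
        return bool(self.bits[value >> 3] & (1 << (value & 7)))


def ipv4_to_int(text):
    """Convert dotted-quad text to its 32-bit integer value."""
    return int(ipaddress.IPv4Address(text))


if __name__ == "__main__":
    print(list_bytes(10**9))   # one billion IPv4 addresses as a flat list
    print(bitmap_bytes(32))    # full IPv4 bitmap
```

A flat list of one billion IPv4 addresses takes 4,000,000,000 bytes, while the full IPv4 bitmap takes 536,870,912 bytes regardless of how many addresses are recorded. The same trick does not carry over to IPv6, where the address space is far too large for a bitmap.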

![Higher dimensions [edit]](https://uploads.gamedev.net/monthly_2017_06/IMG_4843.thumb.png.9d930f0efdd3a0305e7f22c201b73a26.png)

So I think it's about time I talk about that secret super-duper technique I was going to talk about, but said I would post elsewhere first, because it was so good. I'm already satisfied with how things are going (in other areas), so I don't mind posting it. I made all these screenshots and metho…

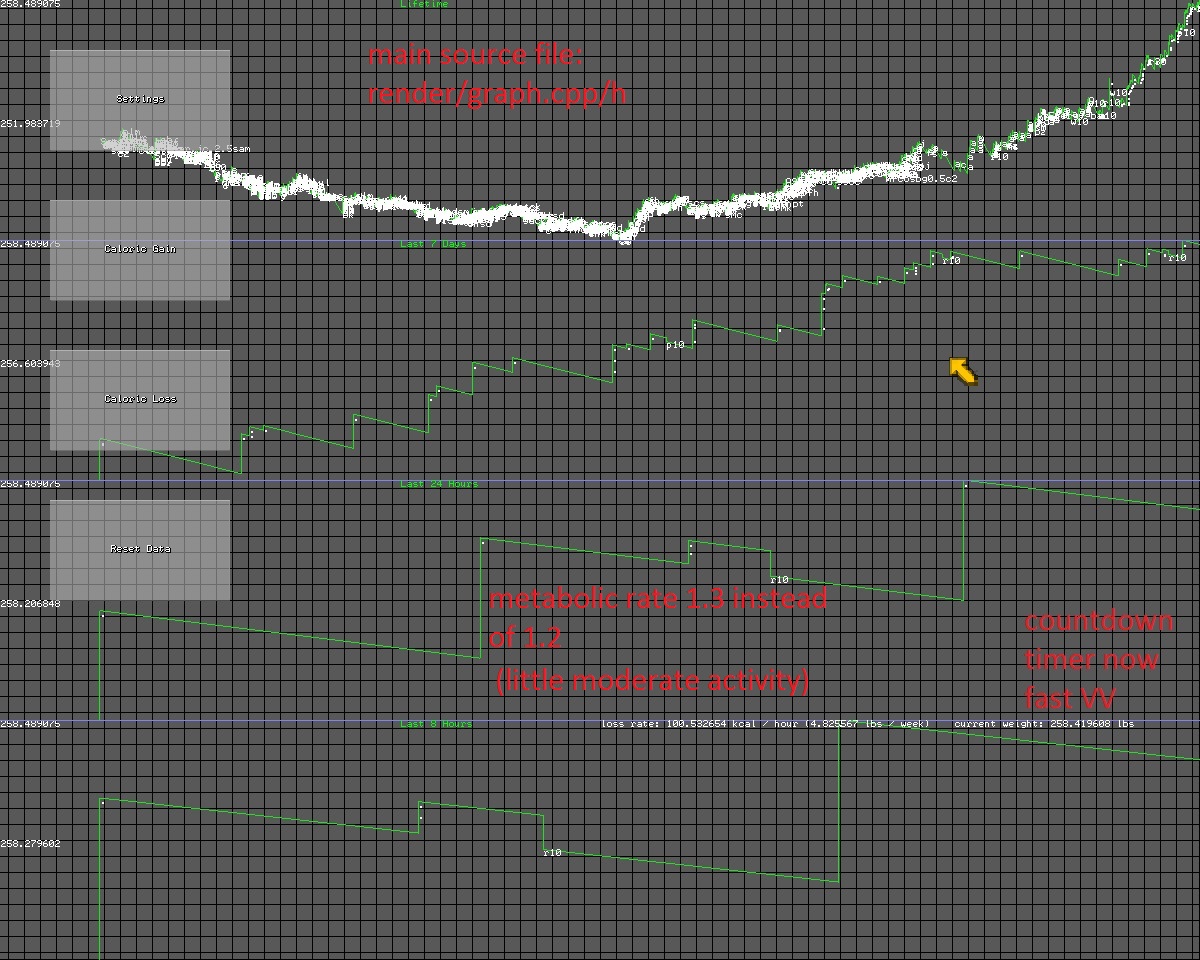

Also, here is the code for the caloric metabolic graph:

https://github.com/dmdware/fg2

And "testfolder" with exe:

https://drive.google.com/file/d/0B2Wuir9-DSiUN0FYbTZ1NGljU0E/view?usp=sharing

You can ignore the copyright / license I have there, if you make an improved version and sell it or make it fre…

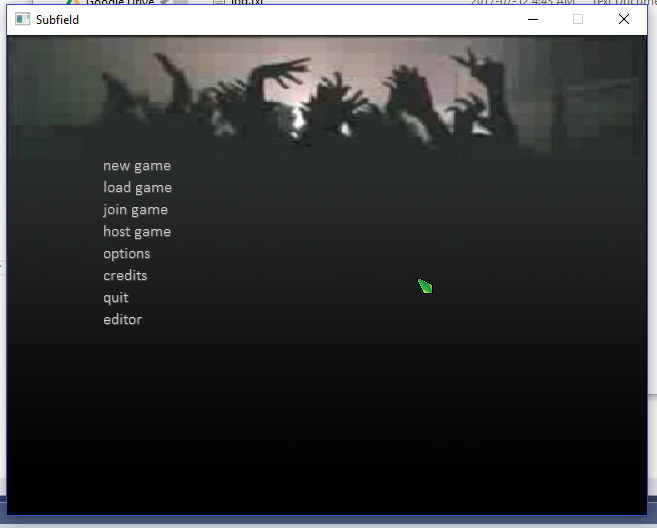

Also found this later version of the zombie shooter.

https://drive.google.com/file/d/0B2Wuir9-DSiUY2V5MnY1ZmF5TTQ/view?usp=sharing

This one has shooting, reloading, jumping, crouching, moving boxes, etc., with sound effects. Press F1 to toggle between third- and first-person view.

This one here is somethin…

Here is what I made to track pixels between frames for the 5-point algorithm that I intended to use for scene reconstruction.

https://github.com/dmdware/srt

The first result I had with the tracking was strangely similar to a map of surface normals. I don't remember how it happened, but I think it was…

Just another small topic. With today's sub-200 ms lag, it may be possible to make a lockstep protocol FPS, where there wouldn't be any advantage to having a faster computer or internet connection, so that people with faster computers or faster internet won't get head-shots or escape from fire while…
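As a rough illustration of the lockstep idea, here is a minimal sketch, assuming a turn-based simulation that only advances once every player's input for the current turn has arrived. The `LockstepSim` class, its one-dimensional positions, and the integer "move" inputs are all hypothetical simplifications, not the protocol from this entry.

```python
class LockstepSim:
    """Toy deterministic lockstep: nobody moves until everyone has sent input."""

    def __init__(self, players):
        self.players = sorted(players)          # fixed order keeps results deterministic
        self.turn = 0
        self.positions = {p: 0 for p in self.players}
        self.pending = {}                       # turn -> {player: move}

    def submit(self, player, turn, move):
        """Record a player's input for a given turn (may arrive in any order)."""
        self.pending.setdefault(turn, {})[player] = move

    def try_advance(self):
        """Apply the current turn only if all players' inputs are present."""
        inputs = self.pending.get(self.turn, {})
        if set(inputs) != set(self.players):
            return False                        # still waiting on someone
        for p in self.players:
            self.positions[p] += inputs[p]
        del self.pending[self.turn]
        self.turn += 1
        return True


if __name__ == "__main__":
    sim = LockstepSim(["alice", "bob"])
    sim.submit("alice", 0, 1)
    print(sim.try_advance())   # bob has not sent input yet
    sim.submit("bob", 0, -1)
    print(sim.try_advance())
    print(sim.positions)
```

Because a turn is applied only when all inputs are in, a faster connection lets a player send input earlier but not act earlier, which is the fairness property described above. The cost is that everyone waits for the slowest player.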

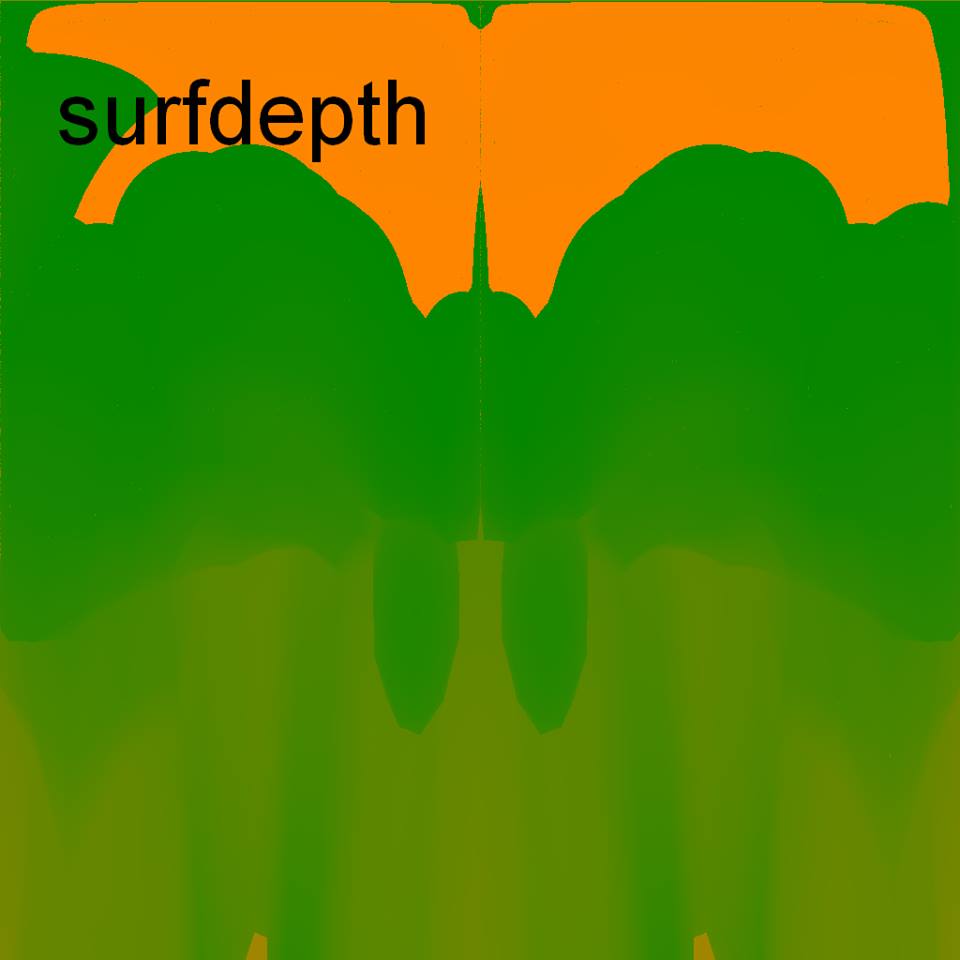

I will also briefly describe how the orientability maps are generated and how they are used.

http://store.steampowered.com/app/468900/3D_Sprite_Renderer/

http://steamcommunity.com/games/468900/announcements/detail/246974483987671222

http://steamcommunity.com/games/468900/announcements/detail/246972580…

![neuro-hash [edit4]](https://uploads.gamedev.net/blogs/monthly_05_2017/blogentry-43550-0-62055700-1496028163.png)

This is probably the last big idea I had, if I can't dig up others.

It started here: https://www.gamedev.net/topic/676377-local-hash/

Since then, I developed it more:

https://github.com/dmdware/lm15

https://github.com/dmdware/lm16

[edit] The "pencil" source code is now availab…

![asdfasfd [edit4]](https://uploads.gamedev.net/blogs/monthly_05_2017/blogentry-43550-0-30844800-1495950415.jpg)

I have some more time for ideas. This one doesn't require writing, just mostly copy-pasting.

This is an idea for a zombie shooter, possibly a novel or a (computer-generated or trash-snipped-together-from-random-Google-images?) movie now.

The original game exe is here, for the earlier version:

![sfdgdsfgsd [correction2] [edit]](https://uploads.gamedev.net/blogs/monthly_05_2017/blogentry-43550-0-57879500-1495901562.png)

The idea is to use the Structure Sensor from Occipital (or an ordinary smartphone with SceneLib), to record the depths and colors of some scene, with readings of the accelerometers and gravitometers, etc. recorded, along with video with sound and color. Then using the orientation of the camera on t…

![Next topic [edit]](https://uploads.gamedev.net/profile/photo-43550.png)

I was going to write up the next topic tomorrow, but now that I've thought about it, I realize I'd better not, until I publish it somewhere else, because it's just so awesome. So you'll have to wait. A few months. In the meantime I will talk about other ideas. And the game idea that it is for i…

![new primes [edit]](https://uploads.gamedev.net/blogs/monthly_05_2017/blogentry-43550-0-78464400-1495865481.jpg)

For the next game idea, I'm going to have to explain two new things in two entries.

I came up with an idea of "cryptographic" like resources that are generated by computing power. Basically, they are like primes, and they can be traded, for example, if one player or factory doesn't have them, they c…

With the addition of accelerometers, gravitometers, magnetometers, cameras, LED beams, GPS tracking and navigation, flashlights, internet, calling, and making smartphones pretty much computers, are smartphones not the Swiss army knife of devices? With the addition of perhaps laser pointing, directi…

![directional bluetooth [edit4]](https://uploads.gamedev.net/profile/photo-43550.png)

If you're interested in my art that I first posted, I have a DeviantArt account here: http://polyfrag.deviantart.com

So the next idea I have is, what if the smartphone had 3 or so "mini-RADARs"? I.e., they would rotate inside the phone, and you could use it to orient yourself with other devices or cel…

![isometric shooters [edit4]](https://uploads.gamedev.net/blogs/monthly_05_2017/blogentry-43550-0-09759200-1495774616.png)

This is another idea I had with several variations. The basic idea is that you control a character, perhaps made of an upper and lower pre-rendered sprite with 8+ angles of yaw and pitch, and you have a crosshair in front that is 3-dimensional. You would use a projection of 3-dimensional coordinate…

[Don't know why this became a draft yesterday] Imagine if you're passing by on the street in a dense city, or sitting in an apartment building, or riding in some part of the city, and your smartphone is exchanging files via bluetooth and/or wifi-direct (piracy? new bit-torrent?). It would be like a…

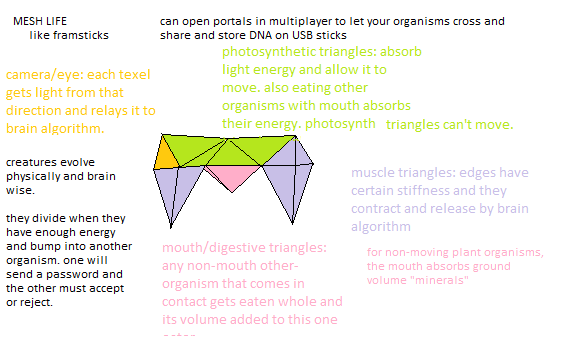

Here is another idea I had. http://23.226.230.153/proj1/ml/ It would've used simplified physics, e.g., for rotations and rotational response to different interpretation with the ground or other meshlife being line intersections or polygonal volumes, it would, for example, have gotten the average point or ca…

The PS Vita has a nice design, and nice buttons and controls, don't you think? I wouldn't mind if it were running some Linux/Chrome/Android-based operating system that I could develop on, and use for general productivity, and as a smartphone, instead of my smartphone right now. I was thinking how I …

![f1, fg2 [reinstatement]](https://uploads.gamedev.net/blogs/monthly_05_2017/blogentry-43550-0-00185100-1495607234.jpg)

[Afterword: I'm reinstating these entries because I was working on a 200-page something-or-other, and didn't realize I could split it up. How exactly is that related? Well, I'm not going to go into details. So just never mind.]

Hello, it's me... I have stopped working on games pretty much, and now a…