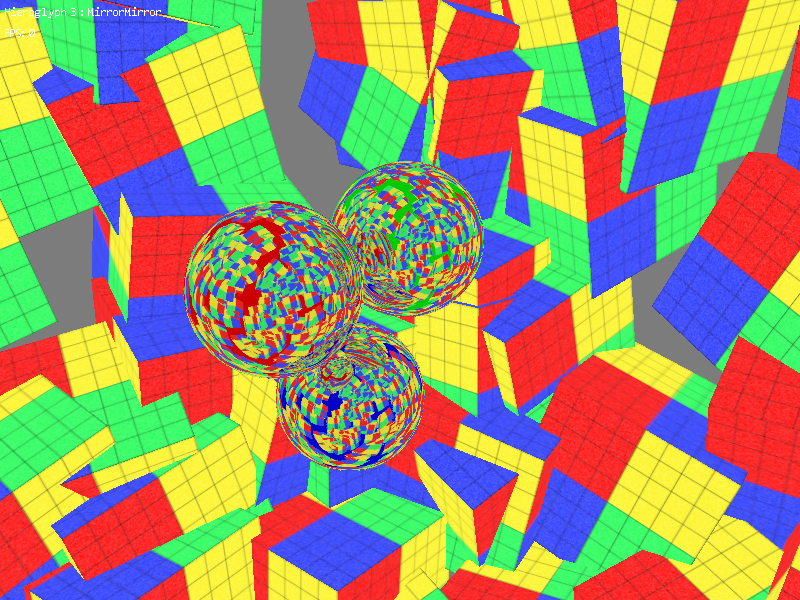

Since each reflective sphere requires the scene to be rendered for its paraboloid map, the sample effectively renders the scene a total of four times - once for each sphere (both paraboloid maps are generated simultaneously) and then once to render the final scene. Thus if I specify 200 objects floating around the spheres, the sample ends up performing approximately 800 draw calls over four rendering passes. This is effectively the best use case for parallel rendering - the workloads are more or less evenly distributed over four threads, and there is a corresponding speed boost when using multi-threaded as opposed to single-threaded rendering.

However, when I did my test I found that the frame rate in debug mode had dropped from somewhere around 70 to ~9. After going back through my source code tree, I found that before some recent changes to how I handle the input assembler stage's state the performance was as expected. This seemed really strange, since the new state management actually should have been more or less equivalent to the old method.

To investigate further, I stepped through the drawing operation with the debugger, and immediately found the issue. I had changed to using a standalone object to represent all of the input assembler state within the engine, including all of the available vertex buffer slots. To set up the situation, there are a maximum of D3D11_IA_VERTEX_INPUT_RESOURCE_SLOT_COUNT slots in the IA, which is currently defined as 32 available slots... I typically reference resources in the engine with a smart pointer to a proxy object. The proxy object contains the indices of a resource, plus any resource views that it would need. The proxy is used by applications as a very easy way to reference several pieces of data as one, and has overall worked out very well.

To get to the point, I was declaring an input assembler state object on the stack in each draw call, which initialized the state object to have null pointers for all of those 32 vertex buffer slots. Even though I was only using a single vertex buffer, I was still initializing all the other slots to null, which amounts to 32 assignments of the smart pointer per draw call. With a little math, we can see what was going on: 32 slots x 200 draw calls x 4 rendering passes = 25,600 smart pointer assignments per frame.
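To make that arithmetic concrete, here is a minimal sketch of the pattern. It is not the actual Hieroglyph 3 code: `ResourceProxy`, `SlowInputAssemblerState`, `g_slotAssignments` and `simulateFrame` are hypothetical names, `std::shared_ptr` stands in for whatever smart pointer the engine uses, and `kVertexBufferSlots` stands in for D3D11_IA_VERTEX_INPUT_RESOURCE_SLOT_COUNT so that the snippet builds without the Direct3D headers.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <memory>

// Stand-in for D3D11_IA_VERTEX_INPUT_RESOURCE_SLOT_COUNT (32), so the sketch
// compiles without d3d11.h.
constexpr std::size_t kVertexBufferSlots = 32;

// Hypothetical resource proxy: in the engine it would carry resource indices
// and any resource views; here one field is enough.
struct ResourceProxy {
    int resourceIndex = -1;
};

// Counts how many smart pointer slots get touched (illustration only).
std::size_t g_slotAssignments = 0;

// Hypothetical input assembler state holding one smart pointer per slot.
struct SlowInputAssemblerState {
    std::array<std::shared_ptr<ResourceProxy>, kVertexBufferSlots> vertexBuffers;

    SlowInputAssemblerState() {
        // Every slot is reset to null, even if the draw call uses only one.
        for (auto& slot : vertexBuffers) {
            slot.reset();
            ++g_slotAssignments;
        }
    }
};

// Simulates one frame: each draw call builds a fresh state object on the stack.
std::size_t simulateFrame(std::size_t passes, std::size_t objectsPerPass) {
    g_slotAssignments = 0;
    for (std::size_t pass = 0; pass < passes; ++pass) {
        for (std::size_t object = 0; object < objectsPerPass; ++object) {
            SlowInputAssemblerState state;  // 32 slot assignments per draw call
            (void)state;                    // the draw itself is omitted
        }
    }
    // Sanity check: slots x passes x objects.
    assert(g_slotAssignments == kVertexBufferSlots * passes * objectsPerPass);
    return g_slotAssignments;
}
```

Calling `simulateFrame(4, 200)` returns 25,600, matching the figure above. The sketch only counts the assignments; how expensive each one is depends on the smart pointer implementation and on the build configuration.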

In the end, I simply switched the stored state to directly use the index of the vertex buffer (since the input assembler doesn't use resource views, there is no need to use the proxy object anyway). Just a few short changes popped the speed right back up to where it should have been. So the moral of the story is this - smart pointers are only as smart as the person that is using them, and sometimes (especially in my case) they end up being not so smart.
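A rough sketch of what the index-based version could look like is shown here. Again, this is an assumption-laden illustration rather than the engine's real code: `IndexedInputAssemblerState`, `kIaSlotCount` and `kNoBuffer` are hypothetical names, and using -1 to mean "no buffer bound" is my own convention for the example.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

// Stand-in for D3D11_IA_VERTEX_INPUT_RESOURCE_SLOT_COUNT (32).
constexpr std::size_t kIaSlotCount = 32;

// Hypothetical sentinel meaning "no vertex buffer bound to this slot".
constexpr int kNoBuffer = -1;

// Hypothetical input assembler state that stores plain resource indices
// instead of smart pointers to proxy objects.
struct IndexedInputAssemblerState {
    std::array<int, kIaSlotCount> vertexBuffers;

    // Filling 32 ints involves no reference counting at all.
    IndexedInputAssemblerState() { vertexBuffers.fill(kNoBuffer); }

    // Binds the vertex buffer with the given resource index to a slot.
    void setVertexBuffer(std::size_t slot, int resourceIndex) {
        assert(slot < kIaSlotCount);
        vertexBuffers[slot] = resourceIndex;
    }

    // Number of slots that currently have a buffer bound.
    std::size_t boundCount() const {
        std::size_t count = 0;
        for (int index : vertexBuffers) {
            if (index != kNoBuffer) {
                ++count;
            }
        }
        return count;
    }
};
```

The state object is now a trivially copyable block of integers, so declaring one on the stack per draw call no longer touches any smart pointer machinery.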

Anyway, this has opened my eyes to some state management issues with Hieroglyph 3, which I am now working on. My goal is to reduce the number of API calls to as few as possible, all the while properly managing the cached pipeline state with respect to multithreaded rendering... This will be the subject of my next post!

In my case, it was the creation of the smart pointers (boost::make_shared()) that was part of the slowdown.

Smart pointers are very good, and I use them a lot in my code, but they shouldn't just be used with a "replace every pointer with a smart pointer!" line of thought. Like everything in C++, used properly, they are of great benefit. Used improperly, your head asplodes.