Apatriarca is right about modern OpenGL practices, but to answer your actual question:

Yes, you've got the gist of it. Create a texture, upload your software-generated buffer to it with the correct format specifiers, then render the texture as a full-screen quad. You generally don't need a glFlush; just call your platform API's present/swap-buffers function, which implicitly flushes before swapping. GL itself has no buffer-swap call, so that part comes from SDL/GLUT/GLFW/SFML/GLX/AGL/WGL/whatever you use.
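To make "correct format specifiers" a bit more concrete, here is a minimal CPU-side sketch. It assumes 32-bit pixels stored as 0xAARRGGBB, which is one common layout for software renderers and not necessarily yours. The names `pack_argb` and `fill_gradient` are made up for illustration. The GL call appears only in a comment because it needs a live context and a bound texture; `GL_BGRA` and `GL_UNSIGNED_INT_8_8_8_8_REV` come from OpenGL 1.2, so on some platforms they are declared in an extension header rather than the base `gl.h`.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Pack one pixel as 0xAARRGGBB. Read as GL_BGRA with
   GL_UNSIGNED_INT_8_8_8_8_REV, blue is taken from the lowest 8 bits
   and alpha from the highest 8 bits of each 32-bit value. */
static uint32_t pack_argb(uint8_t r, uint8_t g, uint8_t b, uint8_t a)
{
    return ((uint32_t)a << 24) | ((uint32_t)r << 16) |
           ((uint32_t)g << 8)  |  (uint32_t)b;
}

/* Hypothetical stand-in for a software renderer: fills a tightly packed
   width*height buffer with a red (horizontal) / green (vertical) gradient. */
static void fill_gradient(uint32_t *pixels, int width, int height)
{
    assert(pixels != NULL && width > 0 && height > 0);
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            uint8_t r = (uint8_t)(x * 255 / (width  > 1 ? width  - 1 : 1));
            uint8_t g = (uint8_t)(y * 255 / (height > 1 ? height - 1 : 1));
            pixels[(size_t)y * (size_t)width + (size_t)x] =
                pack_argb(r, g, 0, 255);
        }
    }
}

/* With a current GL context and a bound texture whose storage was
   allocated once with glTexImage2D, the per-frame upload of this
   buffer would look roughly like:

   glTexSubImage2D(GL_TEXTURE_2D, 0, 0, 0, width, height,
                   GL_BGRA, GL_UNSIGNED_INT_8_8_8_8_REV, pixels);
*/
```

If your buffer uses a different channel order or bit depth, the format/type pair in the upload call has to change to match; a mismatch usually shows up as swapped colours or a slow conversion path in the driver.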

You can relatively easily out-perform ancient Windows APIs if you know what you're doing. You'll naturally do significantly better with a real GPU rendering engine, of course.

All right, thanks a lot for that.

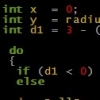

Could you answer one more thing I'm eager to know: which API call moves the pixel array from RAM into VRAM? Is it glTexImage2D? If I want to blit every frame, do I just call it each frame?

Also, the flushing part is not clear to me. I previously used pure WinAPI + OpenGL (an old version), so do I need to flush, do nothing, or do something else?

This is also a question about DirectX. I have never used it, so I have only a very vague idea of how it works. If I wanted to do this in DirectX, should I do it the same way as here in OpenGL, by uploading the texture and drawing it on a quad, or is there another way? (I heard something about pointers to surfaces in DirectX, as if that were a different approach, but I am not sure.) Can such a DirectX blit outperform the OpenGL blit?