Some time ago I was threading (parallelizing) a simple and ugly raytracer of mine.

- It was very easy, as it only read the shared, immutable scene data and wrote pixels to distinct rectangles of the screen, so there were no collisions.
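The rectangle split described above can be sketched roughly like this. It is a minimal illustration, not the original raytracer: `renderTiled` and the `shade(x, y)` callback (standing in for "trace the ray through pixel (x, y)") are hypothetical names, and tiles are handed out through a shared atomic counter so that each pixel is written by exactly one thread.

```cpp
#include <algorithm>
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <functional>
#include <thread>
#include <vector>

// Hypothetical per-pixel callback: reads only immutable scene data.
using ShadeFn = std::function<std::uint32_t(int, int)>;

std::vector<std::uint32_t> renderTiled(int width, int height, int tile,
                                       unsigned numThreads, const ShadeFn& shade) {
    assert(tile > 0 && width >= 0 && height >= 0);
    std::vector<std::uint32_t> pixels(static_cast<std::size_t>(width) * height);
    const int tilesX = (width + tile - 1) / tile;
    const int tilesY = (height + tile - 1) / tile;
    const int tileCount = tilesX * tilesY;
    std::atomic<int> next{0};  // index of the next unclaimed tile

    auto worker = [&] {
        // Every tile index is claimed exactly once, so no two threads ever
        // write the same pixel and the pixel writes need no locking.
        for (int t = next.fetch_add(1); t < tileCount; t = next.fetch_add(1)) {
            const int x0 = (t % tilesX) * tile, y0 = (t / tilesX) * tile;
            const int x1 = std::min(x0 + tile, width);
            const int y1 = std::min(y0 + tile, height);
            for (int y = y0; y < y1; ++y)
                for (int x = x0; x < x1; ++x)
                    pixels[static_cast<std::size_t>(y) * width + x] = shade(x, y);
        }
    };

    std::vector<std::thread> pool;
    for (unsigned i = 0; i < numThreads; ++i) pool.emplace_back(worker);
    for (auto& th : pool) th.join();  // join makes all pixel writes visible here
    return pixels;
}
```

Handing out tiles dynamically (rather than one fixed rectangle per thread) is a design choice that keeps threads busy when some screen regions are more expensive to trace than others.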

When parallelizing the rasterizer, the situation looks different: the scene data is also immutable, so no problem there, but the screen output will overlap heavily (many triangles write to the same pixels).*

So how should I do that?

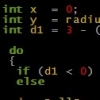

I was wondering whether I should, for example, make each thread rasterize into its own set of frame and depth buffers, and then, in a final stage, "depth add" those buffers into one (keeping the nearest fragment for each pixel).
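The per-thread buffer idea can be sketched as follows. This is a minimal sketch under stated assumptions: 32-bit packed colors, float depth where smaller means closer, depth cleared to +infinity, and hypothetical names `Buffers`, `plot`, `depthMerge`, and `mergeAll`. Each thread would call `plot` only on its own `Buffers`; the merge runs after all threads have joined.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <limits>
#include <vector>

// One private color + depth buffer pair (assumed layout, see lead-in).
struct Buffers {
    std::vector<std::uint32_t> color;
    std::vector<float> depth;

    explicit Buffers(std::size_t pixels, std::uint32_t clearColor = 0)
        : color(pixels, clearColor),
          depth(pixels, std::numeric_limits<float>::infinity()) {}

    // Ordinary depth-tested write. Only the owning thread calls this,
    // so no synchronization is needed.
    void plot(std::size_t i, float z, std::uint32_t c) {
        if (z < depth[i]) { depth[i] = z; color[i] = c; }
    }
};

// "Depth add": fold src into dst, keeping the nearer fragment per pixel.
// On equal depth, the fragment already in dst is kept.
void depthMerge(Buffers& dst, const Buffers& src) {
    assert(dst.depth.size() == src.depth.size());
    for (std::size_t i = 0; i < dst.depth.size(); ++i) {
        if (src.depth[i] < dst.depth[i]) {
            dst.depth[i] = src.depth[i];
            dst.color[i] = src.color[i];
        }
    }
}

// Merge all per-thread buffers into one final image (single-threaded here).
Buffers mergeAll(const std::vector<Buffers>& perThread, std::size_t pixels) {
    Buffers out(pixels);
    for (const Buffers& b : perThread) depthMerge(out, b);
    return out;
}
```

The trade-off is memory and merge time: at 1920×1080 with 4-byte color and 4-byte depth, each thread's pair is about 16.6 MB, and the merge reads every pixel of every buffer. The merge loop itself has no cross-pixel dependencies, so it could also be split across threads by pixel range.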

* I wonder what effect it would have if run without any locking at all: would it be a snowstorm on the screen, only very rare pixel failures, or something else?

Does such colliding access to the framebuffer only cause some visibility artifacts, or does it also cause a slowdown? (Does heavy shared access to a RAM array without locking slow things down?)