Hey,

I found a very interesting blog post here: https://bartwronski.com/2017/04/13/cull-that-cone/

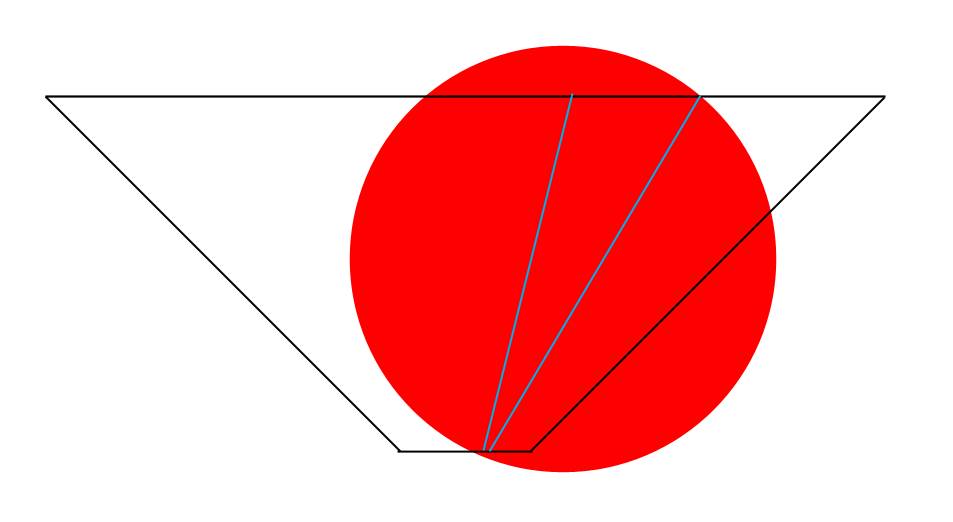

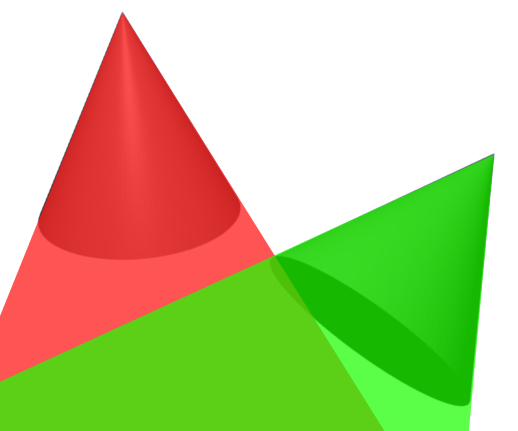

However, I didn't really get how to use his "TestConeVsSphere" test in 3D (the last piece of code in his post). I have the frustumCorners of a 2D tile cell in view space, plus my 3D cone origin and direction, so where do I place the "testSphere"? I thought about also moving the cone into view space and putting the sphere at the center of the cell with a radius of half the cell size, but what about depth? A sphere doesn't have infinite depth.
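
To make the depth problem concrete, here is a rough sketch of one possible approach, not something taken from the post: clamp the tile to a depth range (for example the tile's min/max depth), build the 8 view-space corners of that slice, and wrap a conservative sphere around them. All names here (`Vec3`, `Sphere`, `tileSliceCorners`, `boundingSphere`, `coneVsSphere`) are made up for illustration. It assumes the four tile corners lie on a plane at view-space depth `farZ`, that the eye sits at the view-space origin, that the cone is already in view space with a normalized `forward`, and that `angle` is the cone's half-angle in radians. The cone test is written in the spirit of the post's "TestConeVsSphere"; the exact code there may differ.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cmath>

struct Vec3 { float x, y, z; };

static Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
static Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
static Vec3 operator*(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
static float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

struct Sphere { Vec3 center; float radius; };

// Build the 8 corners of a tile's depth slice. The 4 input corners lie on the
// plane z = farZ; because every tile edge passes through the eye (origin),
// scaling a corner by z / farZ moves it to depth z along that edge.
std::array<Vec3, 8> tileSliceCorners(const std::array<Vec3, 4>& farCorners,
                                     float farZ, float zMin, float zMax) {
    assert(farZ != 0.0f);
    std::array<Vec3, 8> out{};
    for (int i = 0; i < 4; ++i) {
        out[i]     = farCorners[i] * (zMin / farZ);  // near face of the slice
        out[i + 4] = farCorners[i] * (zMax / farZ);  // far face of the slice
    }
    return out;
}

// Conservative (not minimal) bounding sphere: centroid of the corners plus
// the largest corner distance as radius.
Sphere boundingSphere(const std::array<Vec3, 8>& corners) {
    Vec3 c{0.0f, 0.0f, 0.0f};
    for (const Vec3& p : corners) c = c + p;
    c = c * (1.0f / 8.0f);
    float r2 = 0.0f;
    for (const Vec3& p : corners) r2 = std::max(r2, dot(p - c, p - c));
    return {c, std::sqrt(r2)};
}

// Returns true if the sphere may touch the cone (i.e. it is NOT culled).
// size is the cone length along forward; angle is the half-angle in radians.
bool coneVsSphere(Vec3 origin, Vec3 forward, float size, float angle, Sphere s) {
    const Vec3 v = s.center - origin;
    const float vLenSq = dot(v, v);
    const float v1Len = dot(v, forward);  // distance along the cone axis
    const float perp = std::sqrt(std::max(0.0f, vLenSq - v1Len * v1Len));
    // Signed distance from the sphere center to the cone's side surface.
    const float distClosest = std::cos(angle) * perp - v1Len * std::sin(angle);

    const bool angleCull = distClosest > s.radius;
    const bool frontCull = v1Len > s.radius + size;
    const bool backCull  = v1Len < -s.radius;
    return !(angleCull || frontCull || backCull);
}
```

With this, the sphere is no longer infinitely deep; it only covers the chosen depth range. For a tile with a large depth range the sphere gets very loose, so the test would still be conservative but would let through more false positives.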

Am I missing anything? Any ideas?

Thx, Thomas