I'm teaching myself OpenGL and trying to do some stuff with fog and texturing, and I've gotten some strange results. I'm using Python, but the OpenGL bindings look about the same as C if that's what you're familiar with (minus semicolons and pointers, of course).

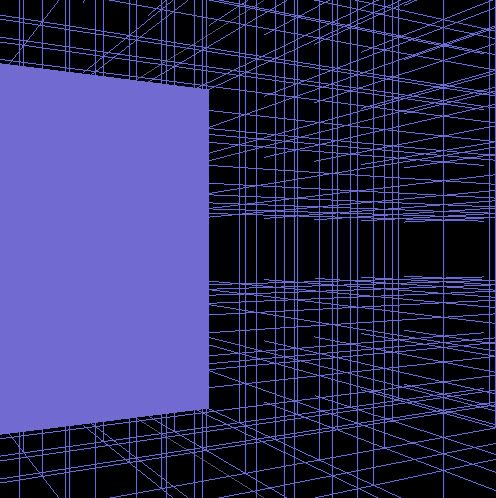

Pictures speak louder than words, so here's what this code produces (called from the GLUT idle callback function):

    glFogi(GL_FOG_MODE, GL_EXP)
    glFogfv(GL_FOG_COLOR, [.4, .3, .8, 1])
    glFogf(GL_FOG_DENSITY, 0.35)
    glHint(GL_FOG_HINT, GL_DONT_CARE)
    glFogf(GL_FOG_START, 1.0)
    glFogf(GL_FOG_END, 5.0)
    glEnable(GL_FOG)
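
For what it's worth, here is a minimal sketch of the arithmetic behind those settings, written in plain Python rather than GL calls. It assumes the standard fixed-function fog equations (GL_EXP: f = e^(-density * c); GL_LINEAR: f = (end - c) / (end - start), both clamped to [0, 1]) and assumes the eye-space distance c is simply 100 for a quad drawn at z = -100 with an identity modelview matrix. The helper names are made up for illustration.

```python
import math

def fog_factor_exp(density, distance):
    """GL_EXP fog factor: exp(-density * distance), clamped to [0, 1]."""
    f = math.exp(-density * abs(distance))
    return max(0.0, min(1.0, f))

def fog_factor_linear(start, end, distance):
    """GL_LINEAR fog factor: (end - distance) / (end - start), clamped to [0, 1]."""
    f = (end - abs(distance)) / (end - start)
    return max(0.0, min(1.0, f))

def apply_fog(fragment_rgb, fog_rgb, f):
    """Blend per channel: f * fragment + (1 - f) * fog."""
    return [f * c + (1.0 - f) * g for c, g in zip(fragment_rgb, fog_rgb)]

# Values taken from the fog setup in this post; distance 100 is an assumption.
f = fog_factor_exp(0.35, 100.0)
print(f)
print(apply_fog([1.0, 1.0, 1.0], [0.4, 0.3, 0.8], f))
```

With density 0.35 the factor at distance 100 is effectively zero, so the fragment comes out as pure fog color. GL_FOG_START and GL_FOG_END only enter the GL_LINEAR equation, so they have no effect while the mode is GL_EXP.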

That GL_QUAD actually has a texture on it. The texture is generated using some code I borrowed from http://www.pygame.org/wiki/Load_32-bit_BMP_with_Alpha with some (I thought) minor modifications to read 24 bits instead of 32 (since no bitmaps I've come across have alpha components) and to just use a straight-up 2D array of RGBA arrays instead of the pygame Surface object.

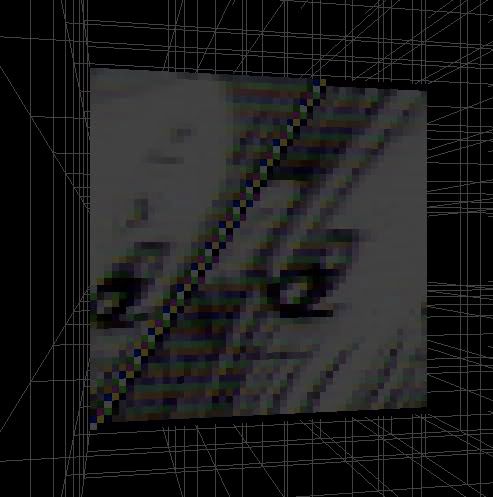

When I go to parse the pixels from this image:

I get this as a result:

(Mind, I had to screencap that source image to get it into a BMP format suitable for parsing; apparently standards aren't strictly enforced.)

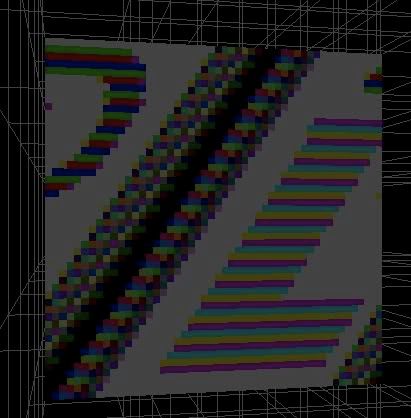

Similarly, using this source image:

produces this as a result:

Note that the anime girl above was flipped vertically, but this one was not. I'm not sure what that could be attributed to. Also, the repeating color stripes on the L confuse me a bit, as though MSPaint does some odd form of compression or something that might create ill-effects, since even if it's the wrong color the entire L should be uniform.
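
One thing that could account for the stripes: BMP pixel rows are stored padded to a multiple of 4 bytes. Here is a minimal sketch of that arithmetic, assuming the image is 50 pixels wide (the size passed to glTexImage2D later in this post). The helper names are hypothetical.

```python
def bmp_row_stride(width, bits_per_pixel=24):
    """Bytes occupied by one stored row, rounded up to a multiple of 4."""
    return ((width * bits_per_pixel + 31) // 32) * 4

def bmp_row_padding(width, bits_per_pixel=24):
    """Unused bytes at the end of each stored row."""
    data_bytes = (width * bits_per_pixel + 7) // 8
    return bmp_row_stride(width, bits_per_pixel) - data_bytes

# Print width, stride, and padding for a few 24-bit image widths.
for w in (48, 50, 51, 52):
    print(w, bmp_row_stride(w), bmp_row_padding(w))
```

A 50-pixel row of 24-bit pixels is 150 bytes of data plus 2 bytes of padding, so a reader that advances exactly 3 bytes per pixel and never skips the padding drifts by 2 bytes per row, which would shift the color channels from row to row.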

Here's the modified version of the pygame code I'm using to read the bitmap.

    f = open( filename, 'rb' )
    data = f.read()
    f.close()

    # Assert: this is a Windows BMP
    if data[:2] != 'BM': raise Exception()

    pixel_data_offset = get_word( data[ 10:14 ] )
    bitmap_info_header = data[ 14:(14+40) ]

    # Assert: this is a valid Windows BMP
    if get_word( bitmap_info_header[ :4 ] ) != 40: raise Exception()

    # Assert: image type is BI_RGB (not compressed)
    if get_word( bitmap_info_header[ 16:20 ] ) != 0: raise Exception()

    width = get_word( bitmap_info_header[ 4:8 ] )
    height = get_word( bitmap_info_header[ 8:12 ] )
    bitcount = get_word( bitmap_info_header[ 14:16 ] )

    # Assert: bitmap has bitdepth of 24-bits per pixel
    if bitcount != 24: raise Exception()

    is_inverted = (height < 0)
    if is_inverted: height = -height

    # Load and store the image data ..........................................
    result = list()
    try:
        for y in xrange( height ):
            result.append(list())
            for x in xrange( width ):
                result[y].append(list())
                result[y][x].append(
                    [ord( data[ pixel_data_offset + 1 ] ), # Red
                     ord( data[ pixel_data_offset + 0 ] ), # Green
                     ord( data[ pixel_data_offset + 2 ] ), # Blue
                     0] # Alpha
                )
                pixel_data_offset += 3
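
For comparison, here is a minimal sketch of how a 24-bit reader might look with the stored B, G, R byte order, the per-row padding, and the sign of the height taken into account. This is not the pygame wiki code: it uses the standard struct module in place of get_word, is written for Python 3 (where indexing bytes yields integers), and the name read_bmp24 and the choice of 255 for alpha are assumptions made for illustration.

```python
import struct

def read_bmp24(data):
    """Parse an uncompressed 24-bit BMP held in a bytes object.

    Returns (width, height, rows). rows[0] is the bottom row of the image
    and every pixel is a list [r, g, b, a].
    """
    if data[:2] != b'BM':
        raise ValueError('not a Windows BMP')
    # Offset of the pixel array, stored at byte 10 of the file header.
    (pixel_data_offset,) = struct.unpack_from('<I', data, 10)
    # First 20 bytes of BITMAPINFOHEADER; width and height are signed.
    header_size, width, height, _planes, bitcount, compression = \
        struct.unpack_from('<IiiHHI', data, 14)
    if header_size != 40 or compression != 0 or bitcount != 24:
        raise ValueError('unsupported BMP variant')

    top_down = height < 0          # negative height: rows stored top first
    height = abs(height)
    stride = ((width * 3 + 3) // 4) * 4   # each row is padded to 4 bytes

    rows = []
    for y in range(height):
        row_start = pixel_data_offset + y * stride
        row = []
        for x in range(width):
            start = row_start + 3 * x
            b, g, r = data[start:start + 3]   # stored as blue, green, red
            row.append([r, g, b, 255])        # alpha 255 is an assumption
        rows.append(row)
    if top_down:
        rows.reverse()             # normalize to bottom row first
    return width, height, rows
```

Rows come back bottom row first, which is the order glTexImage2D expects for its first row of texels (texture coordinate t = 0).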

To display the texture in the display callback I'm using:

    glTexParameteri (GL_TEXTURE_2D, GL_TEXTURE_MAG_FILTER, GL_NEAREST)
    glTexParameteri (GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_NEAREST)
    glTexImage2D ( GL_TEXTURE_2D, 0, GL_RGBA, 50, 50, 0, GL_RGBA, GL_UNSIGNED_BYTE, test)

    glEnable( GL_TEXTURE_2D )
    glBegin( GL_QUADS )
    glTexCoord2f ( 0.0, 0.0 )
    glVertex3f( 0, 0, -100 )
    glTexCoord2f( 1.0, 0.0 )
    glVertex3f( 50, 0, -100 )
    glTexCoord2f( 1.0, 1.0 )
    glVertex3f( 50, 50, -100 )
    glTexCoord2f( 0.0, 1.0 )
    glVertex3f( 0, 50, -100 )
    glEnd()
    glDisable( GL_TEXTURE_2D )
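
Here is a small sketch of one way to hand the parsed pixels to glTexImage2D without hard-coding 50, 50: flatten the rows into one tightly packed byte string and take the width and height from the data. It assumes each pixel is a flat [r, g, b, a] list (not the extra level of nesting that result[y][x].append(...) produces in the reader above). pixels_to_bytes is a hypothetical helper, and the GL call appears only as a comment because it needs a live context.

```python
def pixels_to_bytes(rows):
    """Flatten rows of [r, g, b, a] pixels into (width, height, packed bytes)."""
    width = len(rows[0])
    if any(len(row) != width for row in rows):
        raise ValueError('all rows must have the same width')
    flat = bytearray()
    for row in rows:
        for pixel in row:
            flat.extend(pixel)     # four values in 0..255 per pixel
    return width, len(rows), bytes(flat)

# A 2x1 image: one red pixel and one green pixel.
width, height, texels = pixels_to_bytes([[[255, 0, 0, 255], [0, 255, 0, 255]]])
print(width, height, len(texels))

# With PyOpenGL the upload should then look something like this (not run here):
# glTexImage2D(GL_TEXTURE_2D, 0, GL_RGBA, width, height, 0,
#              GL_RGBA, GL_UNSIGNED_BYTE, texels)
```

Because every pixel is 4 bytes, each row of texels starts on a 4-byte boundary, which matches the default GL_UNPACK_ALIGNMENT of 4.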

This seems straightforward enough that I don't think there's an issue there.

Feel free to cry RTFM, but please point me to TFM, since that pygame link is all I've found on reading RGB data from bitmaps, and they aren't the greatest at documentation.

<tangent> Also, what's the html tag for a scroll box for code? Also also, being able to preserve my tabs so that python code is understandable would be nice >_> </tangent>
